The driverless car & You

In the past year, Google made headlines with its self-driving car, this funny little beetle-like thing with a koala-face which, according to Google themselves, is indeed going to be a serious bid for genuinely autonomous cars – they’ll begin road testing in the beginning of 2015, and the vehicle may be commercially available within ten years.

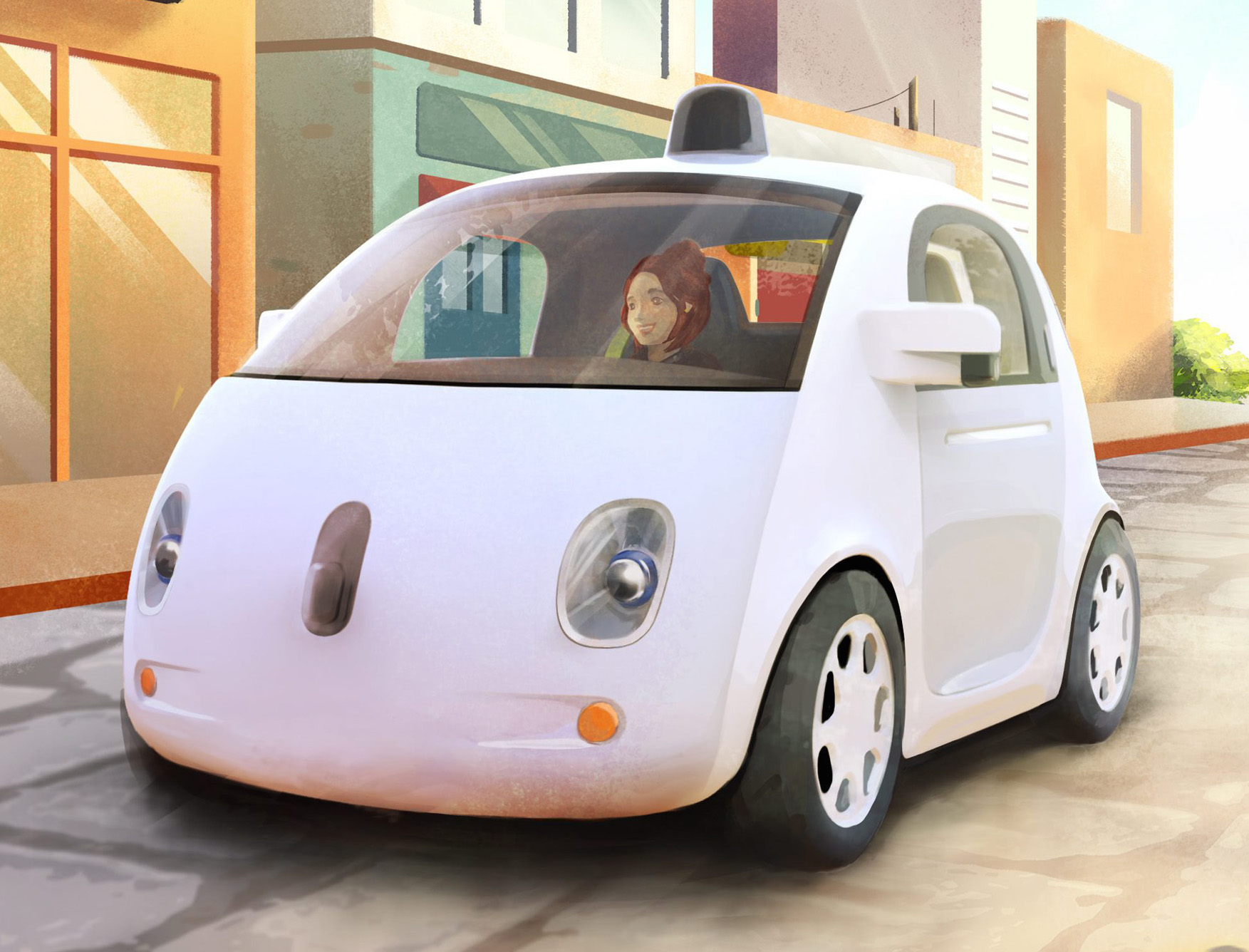

Well, let’s do what we’ve done before, and take a look at the hyped technology of the alleged near future, from the perspective of human experience and behavior. The basic question is what a self-driving car even is, from a user perspective. Look at that image there – Google has decided that a robocar is a regular (compact) car without controls, but maybe that’s not such a great idea. Of course, one might argue that it’s cute, and will therefore put people at ease (though it should be noted that a number of electric cars tried this tactic, and notable success only came with an e-car that actually seemed to be a real car, the Tesla). But why does it have front-facing seats and wing mirrors?

Isn’t this going to subconsciously lead the occupant to believe that they should pay attention to traffic (especially if they’ve driven a real car before), and won’t that conflict with the fact that, if you do see something in the mirrors or out the front you perceive as a danger, you don’t have any controls – you can watch but you can’t act?

The risk of this decision is that the Google robocar will be seen as a regular car which has had something people trust removed, i.e. the steering wheel, and something they don’t trust installed, namely a computer, a.k.a. the thing on your desk – or in your pocket – that mostly works but God (or, more accurately, a sufficiently nerdy friend) help you when it doesn’t. Google calls the project a “moonshot”, but the change may be perceived as incremental, which won’t put people in a mindset to grasp the wider implications of the idea.

“OK”, you say, “so what would you suggest, guy who forgot his driver’s licence when he went to test-drive a Tesla?” – well, here’s the thing: from a people perspective, self-driving cars are two very different things, depending on whether we’re talking about something that goes on the road tomorrow, or something that comprises most or all of the “personal conveyance” fleet in a future a bit further off.

The first kind, which is the kind Google seems to propose, has to operate in traffic mostly made up of people-driven cars, on regular roads. This means that the car can’t take advantage of such things as communicating directly with the other cars, and it also has to contend with motorcycles, bicycles, pedestrians, pets and, of course, unpredictable drivers – and unlike a human driver it can’t rely on things like eye contact or body language to any significant degree.

In other words, that kind of robocar is less an autonomous conveyance, and more a very sophisticated autopilot. Its major pain point, as far as people wanting to use it goes, is going to be what happens when something occurs that the computer didn’t account for. Some suggest things like emergency controls or brakes, but in such situations there may only be fractions of a second to react, and if you aren’t actually driving you may not be paying attention right at that moment. In any event, this is an issue that will have to be addressed before people will feel safe using it.

The second kind would be more along the lines of what Tom Cruise drives in “Minority Report”, which isn’t so much a car as a conveyor belt with leather seats – this kind of transport will be part of an interconnected network, sort-of like a digital railway, and will be truly autonomous on the road; you’ll tell it the destination and it’ll take care of everything itself, because at this point, the roads will be built for it and not for you. The car will know them better than you ever could, not least because the digital network will be a significant integrated part of them.

However, in that scenario all cars will be obliged to run on that digital rail net. Essentially, the cars will be autonomous, but the drivers won’t – they’ll be the computer’s passengers. This will be a huge paradigm shift in how people perceive transportation, and those don’t come easy. On top of that, in the event of an accident, the responsibility won’t be on the driver-passenger, but on the system, which is another major paradigm shift.

To sum up, the challenge of the first kind is that it will probably still need the driver to be in charge, at least in a supervising capacity, and the challenge of the second one is that the driver won’t ever be in charge, not even in an emergency. Now, Google probably aren’t expecting their robocar to be more than a prototype, a step on the way to computerized traffic – but as even these few issues we’ve touched upon here indicate, addressing the human factor will be every bit as important as the technological aspect.